Projects

LLM Debate Arena

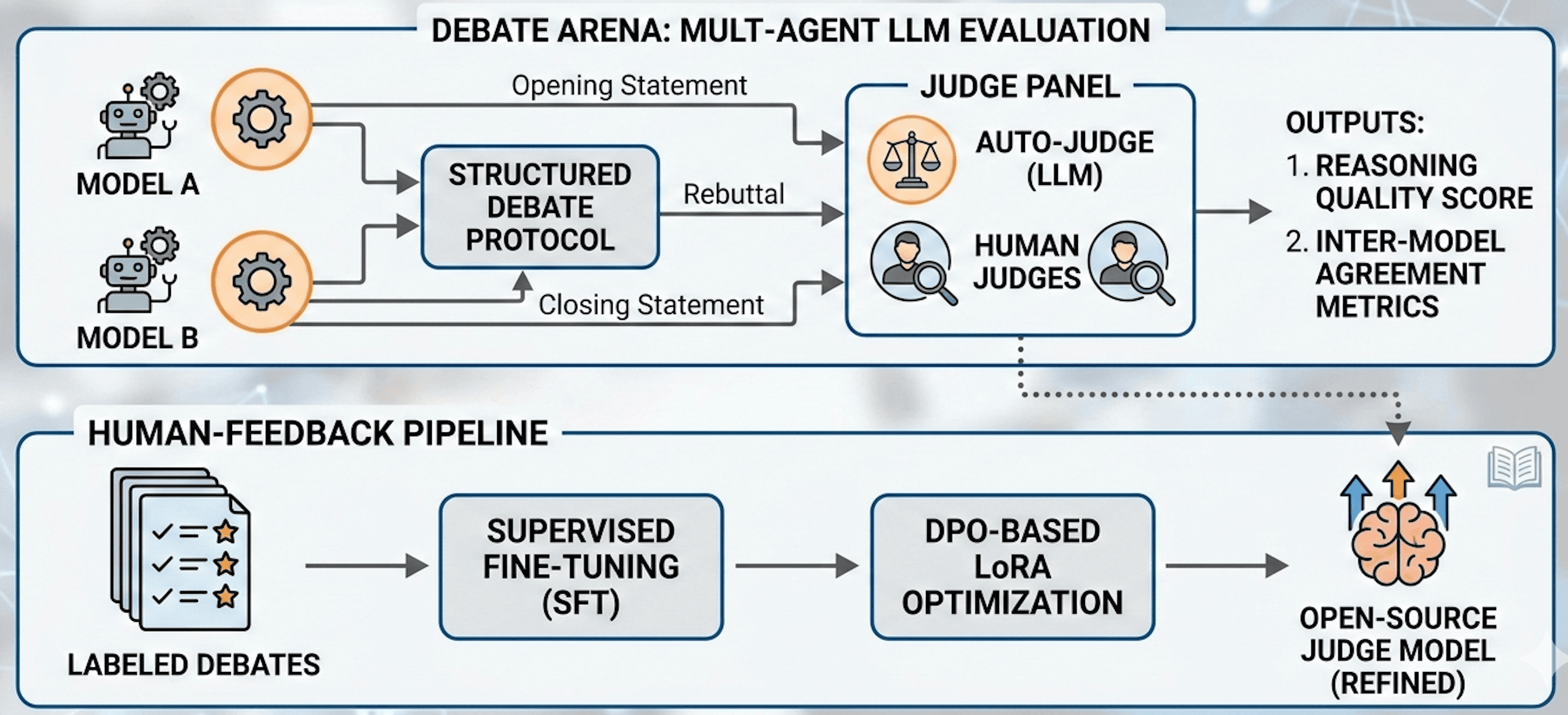

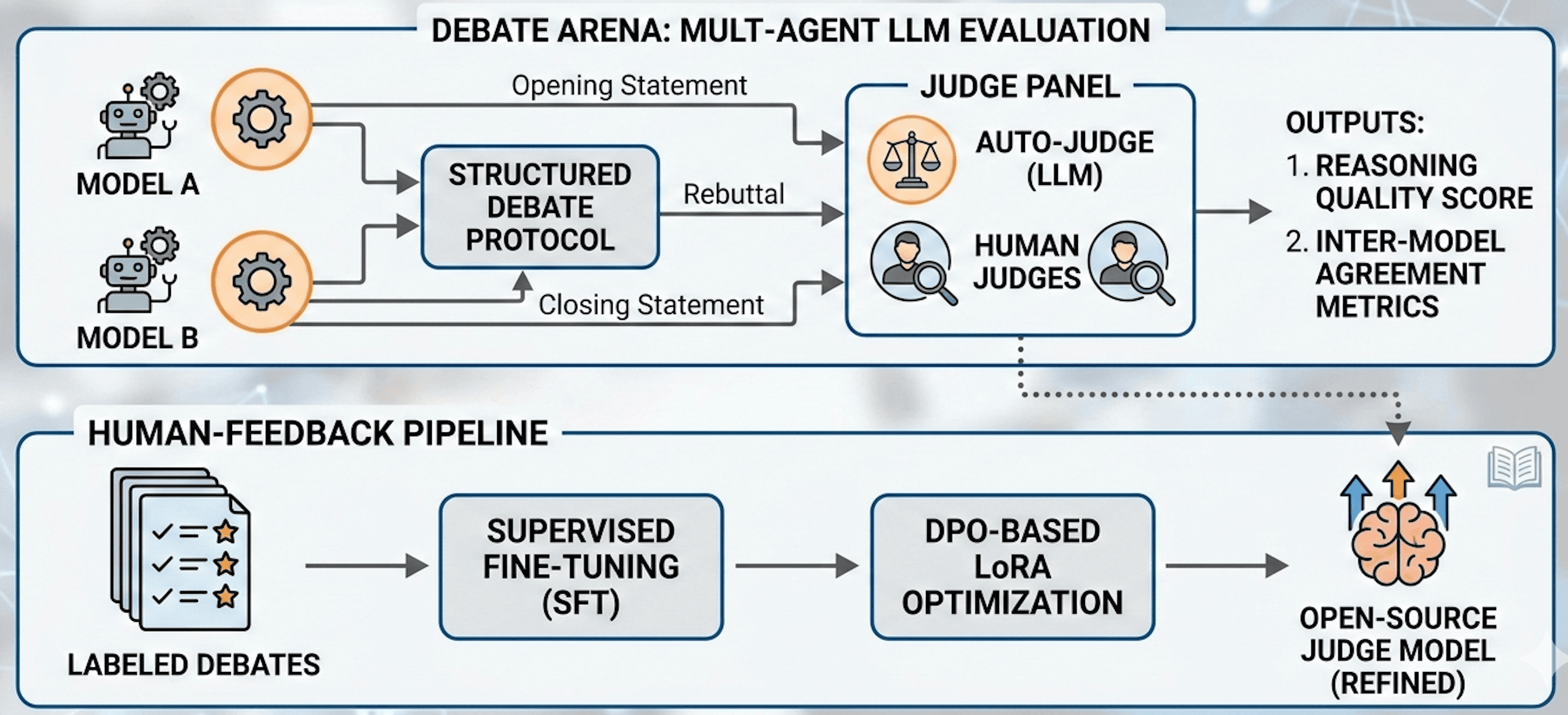

A multi-agent LLM evaluation platform in which models debate under a structured protocol and are scored by a judge panel. A built-in human feedback loop is used to fine-tune open-source judge models with LoRA.
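To make the debate-and-judge flow concrete, here is a minimal sketch, not the project's actual code. It assumes a fixed number of rounds in which each debater speaks once per round, and a judge panel whose per-debater scores are averaged. The names `Debater`, `Judge`, `DebateResult`, and `run_debate` are hypothetical, the stub functions stand in for real model calls, and the human feedback and LoRA fine-tuning steps are not shown.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List

# Hypothetical interfaces (assumptions, not the project's API):
# a debater maps (topic, transcript so far) to its next argument;
# a judge maps (topic, full transcript) to one score per debater name.
Debater = Callable[[str, List[str]], str]
Judge = Callable[[str, List[str]], Dict[str, float]]


@dataclass
class DebateResult:
    transcript: List[str]
    scores: Dict[str, float]
    winner: str


def run_debate(
    topic: str,
    debaters: Dict[str, Debater],
    judges: List[Judge],
    rounds: int = 2,
) -> DebateResult:
    """Run a fixed-round debate, then average the judge panel's scores."""
    transcript: List[str] = []
    for _ in range(rounds):
        # Each debater sees everything said so far before responding.
        for name, debater in debaters.items():
            transcript.append(f"{name}: {debater(topic, transcript)}")

    # Every judge scores every debater; the panel score is the mean.
    ballots = [judge(topic, transcript) for judge in judges]
    scores = {name: mean(ballot[name] for ballot in ballots) for name in debaters}
    winner = max(scores, key=lambda name: scores[name])
    return DebateResult(transcript, scores, winner)


# Stub debaters and judges standing in for real LLM calls.
def stub_debater(stance: str) -> Debater:
    return lambda topic, transcript: f"{stance} argument on '{topic}'"


def judge_a(topic: str, transcript: List[str]) -> Dict[str, float]:
    return {"pro": 7.0, "con": 5.0}


def judge_b(topic: str, transcript: List[str]) -> Dict[str, float]:
    return {"pro": 6.0, "con": 6.0}


result = run_debate(
    "Should judge models be open-source?",
    {"pro": stub_debater("Supporting"), "con": stub_debater("Opposing")},
    [judge_a, judge_b],
)
```

Averaging is only one way to aggregate a panel; majority vote or weighted scoring would slot into the same place in `run_debate`.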
